Good jokes, and good data communication, depend on the audience

Everyone knows that explaining a joke can wreck it. Over-explaining a data visualisation can have the same effect. The trick in both situations is that failing to explain can also sometimes be a problem. The skill is knowing which is which. Jokes often draw on things such as cultural references. An audience already familiar with the references will pick up on them and enjoy getting them. On the other hand, the same joke told to an audience with different cultural references (due to age, location, etc.) might fall completely flat. That second audience might either need some additional explanation or else a different joke.

The same type of audience awareness is important in data communication, though in that case what’s relevant is how knowledgeable the audience is about the domain or about data and analytics more generally. Previous posts have described the importance of making data communication accessible to general audiences that include people who are not particularly data-savvy, but what about the reverse? How do you tailor data communication to an audience with a relatively high level of data capabilities?

The strengths and weaknesses of bubble charts

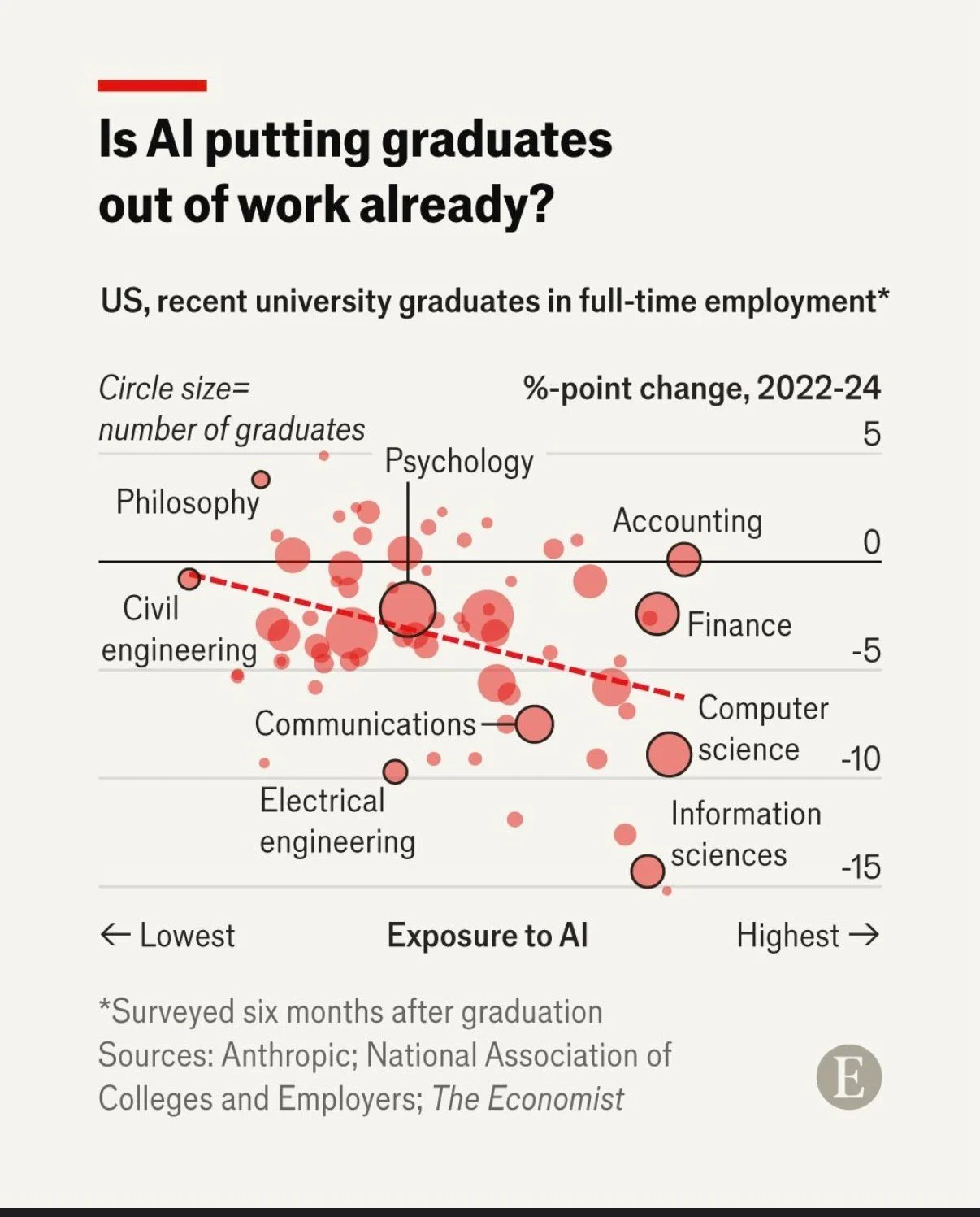

A chart developed by The Economist and posted on LinkedIn provides a good illustration. They use a bubble chart to show the relationship between three characteristics of recent graduates in different fields of study: 1) how exposed the field is to AI, 2) the change in the percentage of graduates in full-time employment between 2022 and 2024, and 3) the number of graduates in that field.

That packs a lot of information into a concise format for an audience that is comfortable with charts and graphs. Given the nature of the content it publishes, it seems safe to assume that includes most readers of The Economist and its LinkedIn followers. Such viewers are likely to be able to assimilate information about the three dimensions simultaneously.

In contrast, bubble charts can be very challenging for more general audiences to understand. Without a lot of explanation, and possibly a more gradual build-up, they may miss or misunderstand some of the information being communicated. The same is true of other more complex chart types, such as box-and-whisker plots or charts with two different y-axes.

Lesson: Complex chart types can reward data-savvy audiences but may confuse general ones.

To explain or not to explain

With its three dimensions’ worth of information, the Economist’s chart reveals interesting patterns with the help of some subtle cues. The solid black horizontal line separates fields in which a larger share of graduates were in full-time employment in 2024 than in 2022 (above the line) from fields in which a smaller share were (below the line). The downward-sloping dashed red line shows the overall trend across all of the fields: those seeing the greatest drop in employment tend to be those with the greatest exposure to AI. Scanning all of the bubbles with the help of those cues and the labels provided, we can see that computer and information science appear to be both among the most exposed to AI and among the fields that saw the greatest drop in employment of recent graduates between 2022 and 2024.
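The cues described above can be sketched in a few lines of code. This is only an illustration under stated assumptions: the field names and numbers are invented, not taken from The Economist's chart, and the ordinary least-squares fit is a stand-in for however the published trend line was actually produced. Scaling bubble area (rather than radius) by the number of graduates is a common convention, assumed here.

```python
from statistics import mean

# Hypothetical data, NOT The Economist's figures:
# (field, AI exposure score, change in employment rate in percentage points, graduates)
FIELDS = [
    ("Field A", 0.2, 1.5, 40_000),
    ("Field B", 0.4, 0.5, 90_000),
    ("Field C", 0.6, -1.0, 25_000),
    ("Field D", 0.8, -2.5, 60_000),
]


def trend_line(fields):
    """Ordinary least-squares fit of employment change (y) on AI exposure (x)."""
    xs = [exposure for _, exposure, _, _ in fields]
    ys = [change for _, _, change, _ in fields]
    x_bar, y_bar = mean(xs), mean(ys)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    return slope, intercept


def side_of_zero_line(change):
    """Say where a bubble sits relative to the horizontal 'no change' line."""
    if change > 0:
        return "above"
    if change < 0:
        return "below"
    return "on"


def bubble_area(graduates, scale=0.01):
    """Third dimension: bubble area grows in proportion to graduate numbers."""
    return graduates * scale


if __name__ == "__main__":
    slope, _ = trend_line(FIELDS)
    print("Trend slopes", "downward" if slope < 0 else "upward or flat")
    for name, _, change, graduates in FIELDS:
        print(name, side_of_zero_line(change), "the line; area", bubble_area(graduates))
```

A negative slope corresponds to the downward-sloping dashed line: in this made-up data, more exposed fields tend to show larger drops in employment.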

While all of that is interesting, the visualisation doesn’t say any of that, nor does the LinkedIn post in which it featured. The visualisation simply poses the question: ‘Is AI putting graduates out of work already?’ For a data-savvy audience, such as readers of The Economist, that creates an interesting puzzle to be solved. The chart gives them the information needed to solve it, and they have the skills to do so.

With a different audience, much more explanation would be needed to help them see how the chart answers the question posed. For example, they might not intuitively grasp the purpose of the dashed line or the meaning of the pattern of the bubbles. They also might not understand why the vertical axis shows the change in employment rather than just the employment rate.

You might be thinking: why not just explain everything all of the time? It does make sense to err on the side of explanation when you’re not sure about an audience, but too much explanation to an expert audience can easily come off as boring or condescending. It can also deprive them of the pleasure of finding the patterns themselves.

Lesson: More sophisticated audiences require less explanation.

This visualisation is a Good example of data communication for this audience — but with a different audience, the same chart could easily be Bad. Like knowing when not to explain a joke, knowing when not to explain a chart is a skill — and it starts with knowing your audience.

Get to the point

In one of the courses I teach for Wellington Uni-Professional, I have participants do an exercise where they critique an example of a data-intensive report (or other form of data communication) created by someone else. Over many iterations of the exercise, the most common negative critique relates to length. The examples are often reports that are hundreds of pages long, which are, unfortunately, extremely common in the New Zealand public sector. Participants rightly observe that few, if any, people are likely to want to wade through all of that, and that the length of the documents makes it hard for people to find the information they really want and makes them potentially unlikely to even bother.

A recent information release from Statistics New Zealand illustrates the benefits of carefully curating data communications to focus on only what is essential and then to present that in a highly digestible way.

Less is more

In the course, we speculate about why the examples we are critiquing ended up being as long as they are. Theories typically include the authors erring on the side of including information just in case someone wants it, wanting to show all the analysis that they did, and not leaving enough time before a deadline to do a careful edit.

Whatever the reason, counter-intuitively, more information in a report, presentation or dashboard often leads to a worse experience for the reader or viewer. It makes it hard for them to find the information that they’re looking for and makes it more likely that they won’t try or will be unsuccessful even if somewhere, hidden in all of that material, are insights that would be very valuable to them.

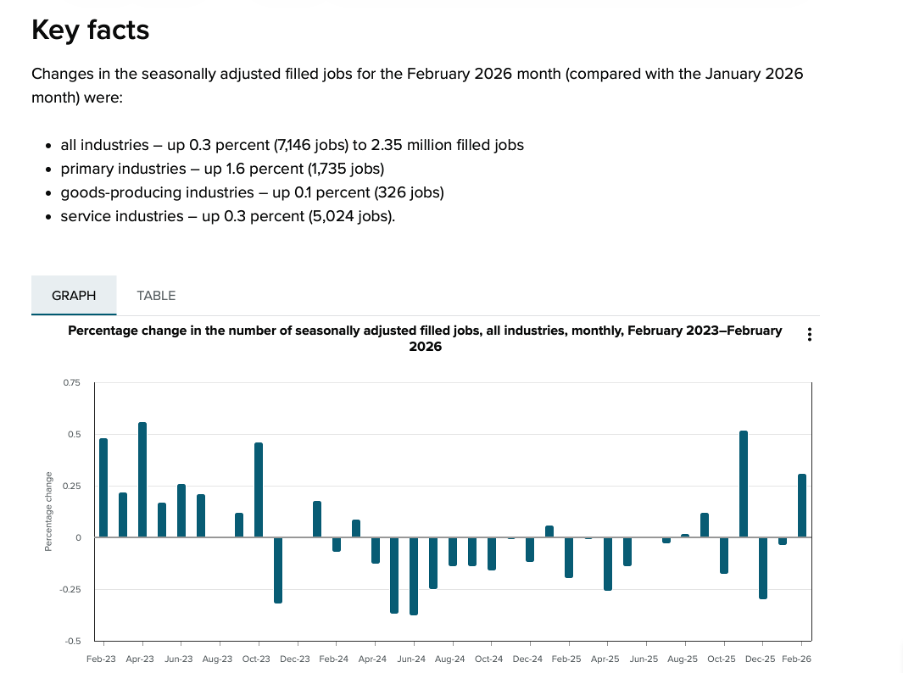

Providing an overly long report or other form of data communication essentially outsources the curation and editing work to the reader or viewer when it should be the responsibility of the author. Statistics New Zealand’s Employment Indicators for February 2026 provides a good illustration of how concise a valuable data-intensive output can be when the authors take that responsibility seriously.

Image reproduced for purposes of education, criticism and commentary.

In just a page or two’s worth of words and numbers it provides a quick overview of changes in the number of filled jobs in New Zealand over time (as shown above), and breaks down changes by industry, region, gender, and age over the previous month (as shown below). It also shows changes in jobs as both a percentage and an actual number of jobs. That’s enough for most people to gain a clear understanding of recent trends in the job market for a very small investment in time.

Lesson: Carefully consider what information is essential and provide only that.
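Reporting a change as both a percentage and an actual number, as described above, takes very little arithmetic. The sketch below shows one way to do it; the function names and the example figures are hypothetical and are not drawn from the Statistics New Zealand release.

```python
def describe_change(previous, current):
    """Return the change as (absolute difference, percentage of the previous value)."""
    if previous == 0:
        raise ValueError("Percentage change is undefined when the previous value is 0")
    absolute = current - previous
    percent = absolute / previous * 100
    return absolute, percent


def format_change(label, previous, current):
    """Format both views of the change in one short, readable line."""
    absolute, percent = describe_change(previous, current)
    return f"{label}: {absolute:+,} jobs ({percent:+.1f}%)"


if __name__ == "__main__":
    # Hypothetical figures for illustration only.
    print(format_change("Hypothetical industry", 2000, 1990))
```

Showing both views guards against misreading: a small percentage change in a large group can still be a large number of jobs, and a large percentage change in a small group can be only a handful.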

Image reproduced for purposes of education, criticism and commentary.

Start general then move to specifics

In addition to providing a good illustration of carefully pruning back to the most essential information, the Statistics New Zealand example also demonstrates a general pattern for how to summarise information well, and that is to start at a high level of aggregation and then break things down into more granular detail.

In this example, that means starting with the percentage change in total filled jobs by month and then subsequently showing changes in the prior month by industry, region, gender, and age. Starting with that high-level view lets readers or viewers orient themselves first and then see how specific industries, regions, or groups vary from broader trends (such as the relatively large drop in jobs filled by 15-19-year-olds in this instance). It also means that someone who only cares about the high-level view can stop once they’ve seen that.

Lesson: Start with the high-level view, then break things down into greater detail.

What are my options?

While many people may only want the high-level view, and most will be very pleased with a concise, well-curated version of the story you are telling with data, some may want additional information. That might include additional methodological detail, even more granular cuts of the data, or the data in an alternative format.

As the Statistics New Zealand example shows, in today’s environment it’s easy to give readers and viewers the option of accessing that type of information via links to additional details, data, and information. In this situation, that includes links to download the actual data, to see definitions and metadata, and to show the time series data as a table rather than a chart.

This approach is convenient for people who want to download data to do their own analysis rather than just looking at it, people who want to better understand particular aspects of how data was collected and analysed, and people using technologies designed to aid accessibility.

Lesson: Provide options to access more information or alternative forms of the same information.

We’re living in a time when attention is at a premium, and it’s important to reflect that in the data products that we create. Take a little extra time to carefully curate your data communications to make sure that they contain only the most essential information, begin with higher-level insights then move to more granular ones, and give users who want more detail options for getting it. If you do, your report, presentation, or dashboard may end up being one of the positively critiqued examples in my classes or in this blog.

On average, considering variation leads to better decisions

Garrison Keillor describes the people of his fictional town, Lake Wobegon, by saying "All the women are strong, all the men are good-looking, and all the children are above average." While the last claim is impossible and the first two are unlikely, even for a fictional town, the statement illustrates our tendency to focus on aggregate statistics such as totals and averages when we summarise things.

Aggregate statistics are important, but if you only examine overall measures such as those without also checking the extent and nature of underlying variation you may miss important pieces of the puzzle you are trying to put together. That’s particularly the case when what you are describing is less homogeneous than the people of Lake Wobegon.

A recently released report on community engagement done by the New Zealand Transport Agency (NZTA) regarding proposed changes to how State Highway 1 travels through Wellington demonstrates the value of looking into the extent and nature of variation rather than just at aggregate statistics. It does that by breaking down the data in a variety of ways, using a consistent chart type to make it easy to compare different results and get a clear understanding of the overall situation. Whatever your opinion about the changes proposed for State Highway 1, the report is an example of good data communication.

Aggregate measures sometimes don’t show the full picture

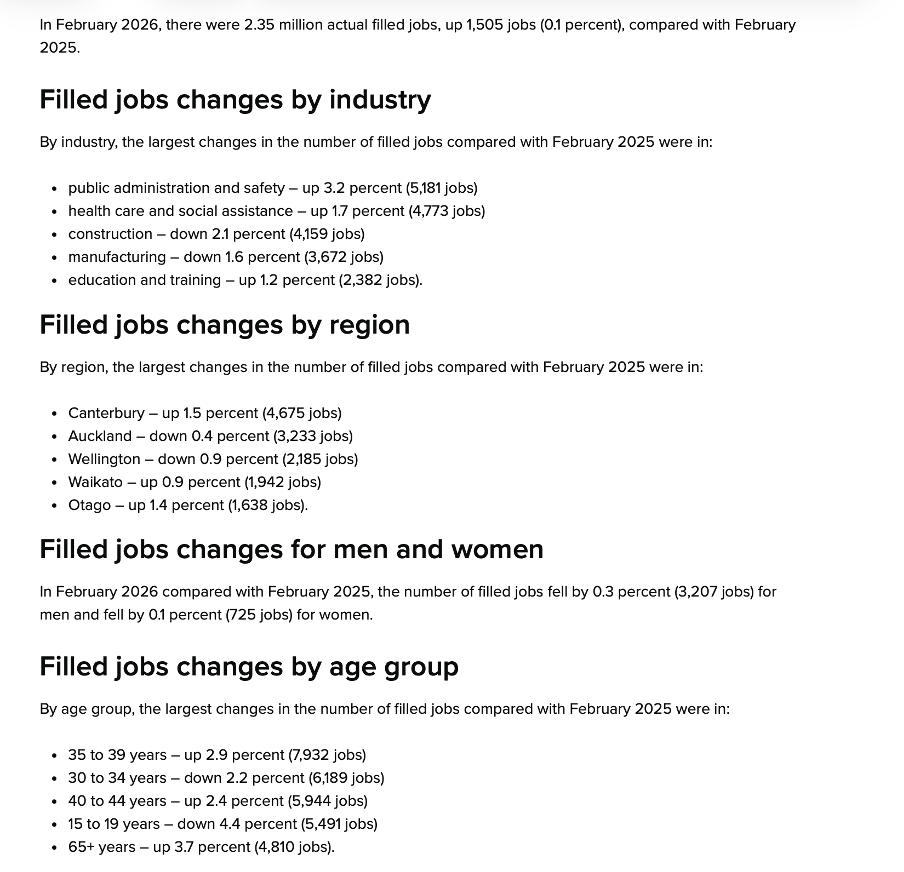

The report summarises results of a survey that asked about five specific changes being considered to the portion of State Highway 1 that runs through Wellington City. Community members who took the opportunity to provide feedback were asked to indicate how they believe the changes overall, and each change individually, would affect them personally and the Wellington region more generally.

Results for each of those two measures for the full set of changes collectively are shown in aggregate in Figure 5-1.

Image reproduced for purposes of education, criticism and commentary.

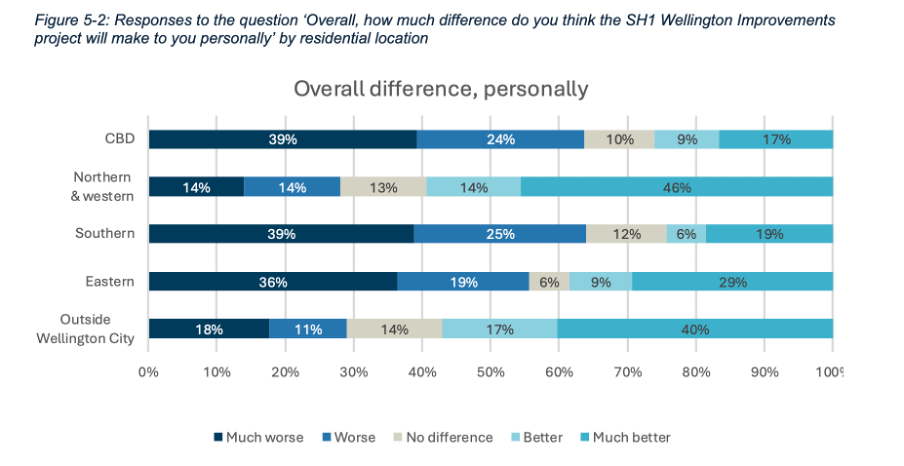

Importantly though, the report also includes the results for each measure based on where survey participants live, as shown in Figure 5-2 below for how survey respondents think the whole programme of changes would affect them personally.

Image reproduced for purposes of education, criticism and commentary.

If we only looked at the first figure, we would get a sense that the community is somewhat evenly split on whether the project overall would make things better (41%) or worse (49%) for them personally. Examining the more granular geographic breakdown of results, however, makes it clear that the people who believe the changes would make things better disproportionately live outside of Wellington City or in the northern and western suburbs, while the people who believe the changes would make things worse for them disproportionately live in the CBD and the southern and eastern suburbs.

This is important information for policy makers to consider given the changes proposed would occur in the CBD and the southern and eastern suburbs. In other words, the people most likely to be most directly affected by the changes during and after implementation were disproportionately likely to believe the changes would make things worse for them personally.

In this situation, a lot of variation in attitudes toward the proposed changes can be explained by where people live, but characteristics such as age, gender, and income may account for variation in other metrics. It’s always worth checking for such differences rather than just focussing on aggregate measures such as totals and overall averages when using data to make decisions.

Lesson: Aggregated results may hide a lot of variation across different characteristics. Check for such differences, and when they exist show and explain the variation as well as the aggregated results.
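The check described in this lesson can be sketched as a comparison between one overall share and the same share calculated per group. The areas and response counts below are invented for illustration and are not the NZTA survey results; the function names are hypothetical too.

```python
from collections import Counter

# Hypothetical responses, NOT the NZTA data: (where the respondent lives, response)
RESPONSES = (
    [("Area X", "better")] * 70
    + [("Area X", "worse")] * 30
    + [("Area Y", "better")] * 25
    + [("Area Y", "worse")] * 75
)


def share_better(responses):
    """Percentage of responses saying the changes would make things better."""
    counts = Counter(answer for _, answer in responses)
    return 100 * counts["better"] / sum(counts.values())


def share_better_by_group(responses):
    """The same percentage, calculated separately for each group."""
    groups = {}
    for group, answer in responses:
        groups.setdefault(group, []).append((group, answer))
    return {group: share_better(rows) for group, rows in groups.items()}


if __name__ == "__main__":
    print("Overall:", share_better(RESPONSES))
    print("By area:", share_better_by_group(RESPONSES))
```

In this made-up data the overall figure looks like a near-even split (47.5% better), while the breakdown shows one area strongly in favour (70%) and the other strongly against (25%). That gap is what the aggregate hides.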

Make comparison easy

Previous posts have shown examples of inconsistency in how data insights are communicated. This report shows the benefit of consistency when it comes to choices such as the type of chart, the order of data series, and the colour scheme.

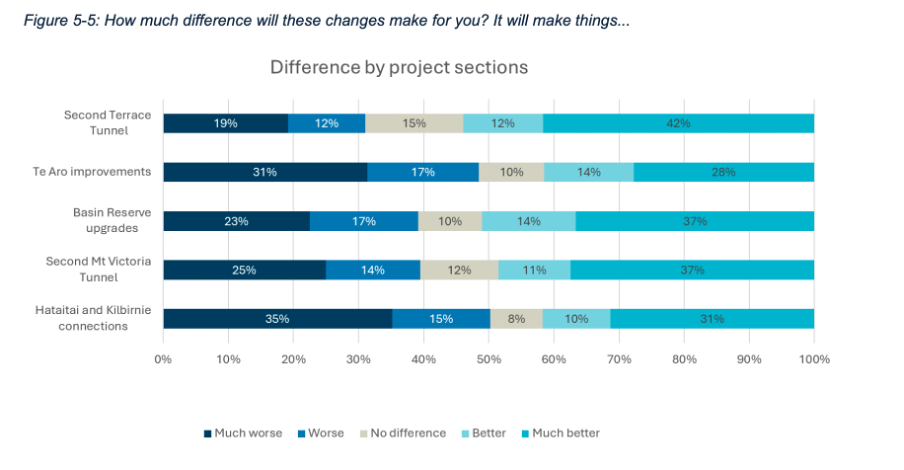

Both Figure 5-1 and 5-2 use stacked bar charts, ordered to show responses from much worse to much better, and with the same colours used to represent each possible response option. Similar charts were used to show perceived personal and Wellington-wide effects toward each change considered. For example, Figure 5-5 below shows how responses varied for each change under consideration.

Image reproduced for purposes of education, criticism and commentary.

A stacked bar was a good choice for this data because the response options for each question are mutually exclusive and some labels for different projects and locations are long. Having selected an optimal chart type for the situation, keeping everything else constant makes it very easy to make comparisons between charts as well as within them. It means that someone reading the report can concentrate on how attitudes change depending on where a person lives or what part of the project is being considered rather than forcing them to waste mental bandwidth trying to orient themselves around each new chart because it’s designed slightly differently.

Lesson: Using charts of the same type and with the same order and colour scheme helps facilitate comparisons.

Unlike the fictional, reportedly highly homogeneous Lake Wobegon, variation is common in the real world and the data that represents it. Understanding that variation rather than focussing exclusively on aggregate metrics won’t make decisions such as whether to change a state highway easy, but it will, on average, result in better decisions.

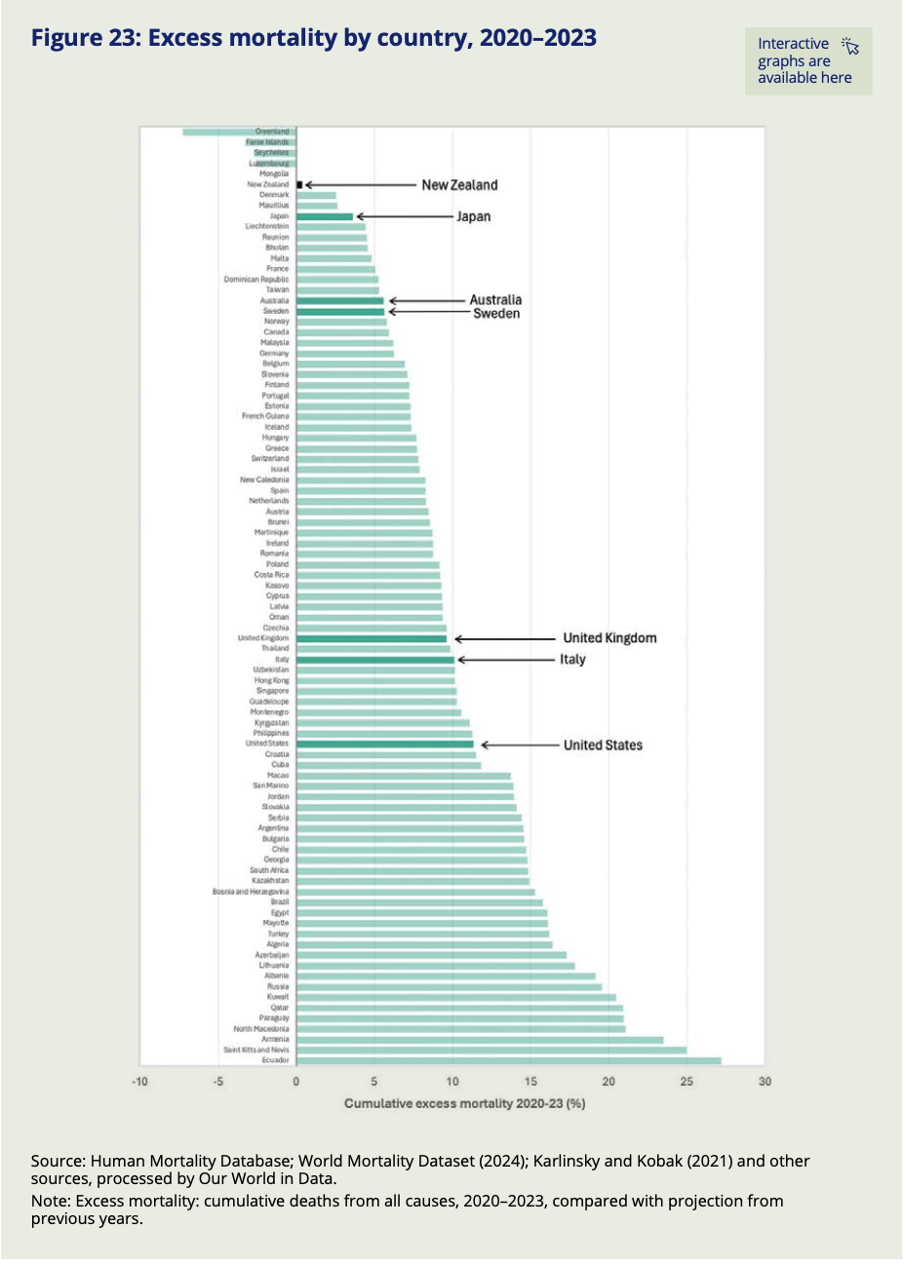

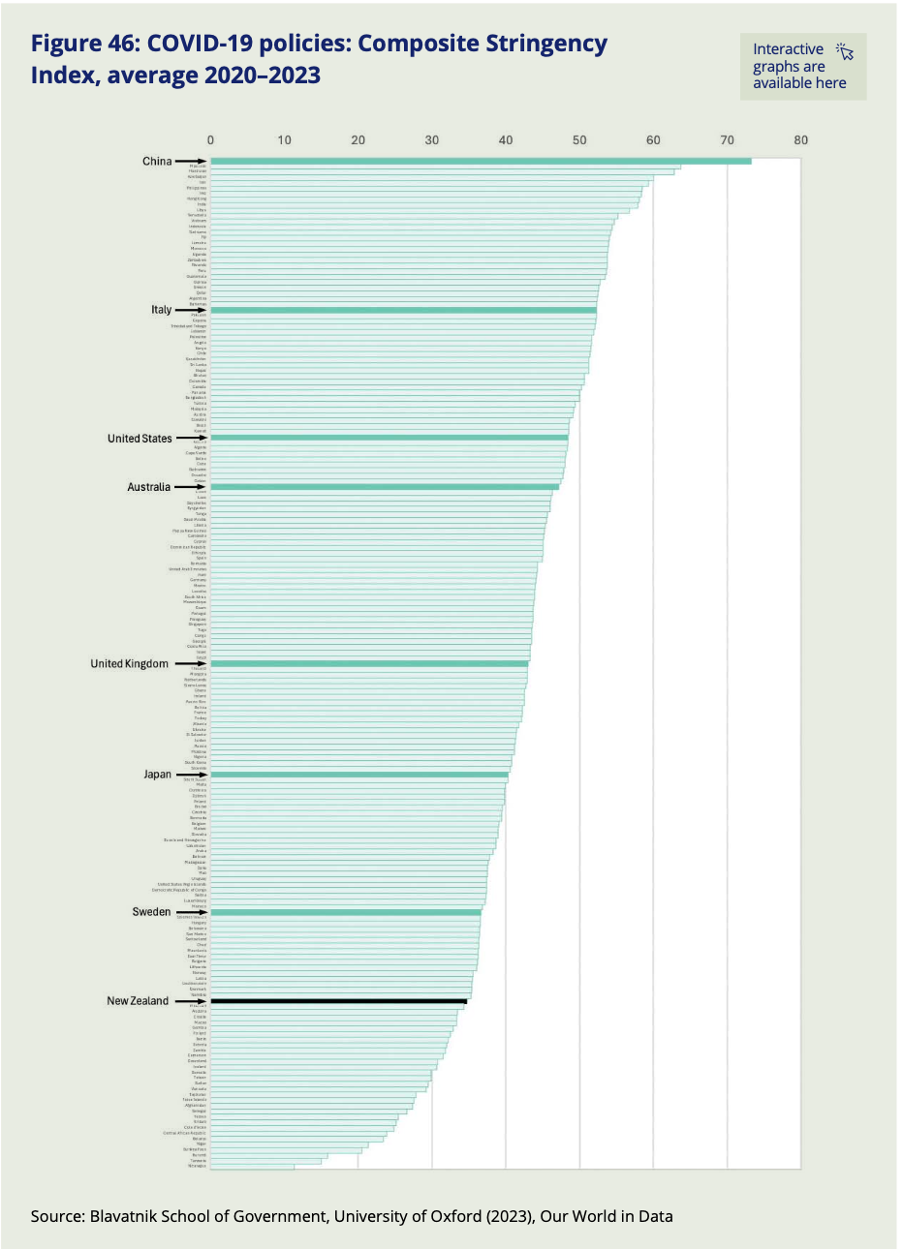

Clearly communicating when conclusions may be complicated or contentious

Only five out of the eighty-nine countries and regions included in the World Mortality Dataset had fewer excess deaths than New Zealand over the pandemic period. That might seem unremarkable to people who lived through New Zealand’s lockdowns or to those elsewhere who read about them — except that, indexed across the full period from 2020 to 2023, New Zealand's Covid restrictions were actually less stringent than those of almost every country it is typically compared to, including Sweden, the United Kingdom, Australia, Italy, and the United States. Whether that finding surprises you or confirms what you already suspected, you don't have to take anyone's word for it. The data is right there for you to check yourself — and that is no accident.

It’s part of a recently released series of reports from the New Zealand Royal Commission examining lessons learned about the country’s experience with Covid-19. The pandemic and its aftermath are things that all adults experienced and nearly all have opinions about. The pandemic also influenced, and was influenced by, a complicated web of public health, economic, and legal policies and practices. In situations like this, where the data and related analysis are complex, and many people have strong opinions about, or vested interests in, the conclusions, the stakes and potential for a contentious reception are high.

In communicating the results of its investigation into this period of New Zealand’s history to provide lessons to guide responses to pandemics the country may face in the future, the Royal Commission also did two things that provide important lessons for those trying to communicate data-intensive insights in the future.

Divide and conquer

Somewhat unusually, the final outputs from the Commission’s work were delivered in not one, but three reports. The main report is mainly text that systematically lays out conclusions and recommendations about different aspects of the pandemic response. Two separate reports summarise submissions made to the Commission by the public and provide a curated collection of relevant publicly available data.

The one summarising the submissions includes some photos of, and direct quotes from the people who made submissions, showing how the pandemic affected them personally. The data-oriented report includes many charts and graphs showing how New Zealand compared to other countries on a variety of measures and also tracks changes to various measures before, during, and after the pandemic.

The information in those two separate reports informs the conclusions and recommendations in the main report, but each output is hundreds of pages individually, so combining them without removing any content would have created a document of over one thousand pages. Even the most interested parties are unlikely to want to read that.

Faced with a situation like this, it helps to split things up. In some cases that might mean dividing content by topic. In this case, for example, that might have meant separating the health response from the economic response and the legal response. Those things were all intertwined though, so it makes sense that’s not the option that the authors chose.

Another common way of dealing with this type of problem is to make different reports for different target audiences. In this situation that might have meant one report for policy makers and another for the general public. While that’s often a good solution, the pandemic is a rare situation in that anyone reading anything about it is likely to have some direct personal experience with it, and yet there is probably no one who is an expert in all aspects of it. Health professionals don’t necessarily understand the nuances of the economic issues that had to be addressed and vice versa, and everyone, regardless of their professional perspective, also had personal experiences.

Splitting the content the way the Commission did made it easy for politicians and policy makers to focus on the main document to consider possible lessons for the future (and to try to attribute blame for past decisions). It also demonstrated that the Royal Commission listened to and heard the many people who made submissions to it, which is particularly important given how intense, and often sceptical, feelings around the pandemic are. Finally, it allowed people who want to dive into the detailed and comparative data to easily find that. We will look more closely at some of that data ourselves next.

Lesson: If you have a lot of data-intensive information to communicate or you are trying to communicate data-intensive information to multiple audiences with different needs, consider using multiple outputs rather than trying to create one that is intended to be everything for everyone.

Anticipate assumptions, hypotheses and objections

Focusing now on the data-oriented ‘Covid by the Numbers’ supplementary report, we can see another smart decision. That was to make it easy for people to check their own assumptions, hypotheses and objections against the actual data.

People looking at any sort of data-oriented output often have their own views about the phenomena being examined. That’s certainly true of Covid. In some cases the views may be implicit assumptions, but in others they may be explicit hypotheses about how one thing affected another. In this and other situations, we can think about our audience’s assumptions and hypotheses and anticipate objections they might have to insights being presented. I think of these as the ‘yeah, but…’ thoughts that form in people’s minds as they watch a presentation or read a report.

We can leverage our understanding of an audience’s assumptions, hypotheses and objections in constructing data-oriented outputs. The idea is that almost as soon as the ‘yeah, but…’ thought forms in the minds of the viewer or reader the next slide or the next page of the report provides the information needed to answer that question or address that concern.

In the case of Covid, a common type of ‘yeah, but…’ thought is likely to relate to other countries. For example, ‘Yeah, but Sweden didn’t have so many rules, and not that many people died there.’ Or ‘Yeah, but people in the UK were much freer to live their lives.’ The ‘Covid by the Numbers’ supplementary report addresses concerns such as those with a series of charts providing comparative data across many countries and highlighting the exact countries that are most likely to feature in ‘yeah, but…’ thoughts.

For example, Figure 23 shows excess mortality by country (going from least to most excess mortality, and also explaining what excess mortality is in a note at the bottom) and Figure 46 shows the stringency of Covid policies (going from most to least stringent, though it might have been better to always have the most desirable end of the scale at the top). Both use highlighted bars along with arrows and larger labels to make it easy to find comparator countries most likely to feature in ‘yeah, but…’ thoughts and therefore make it easy for readers to test their own assumptions, hypotheses and objections against the actual data.

Image reproduced for purposes of education, criticism and commentary.

Image reproduced for purposes of education, criticism and commentary.

Lesson: Consider implicit assumptions, explicit hypotheses, or possible objections your audience is likely to have about the data you are trying to communicate and make it easy for them to test their assumptions, hypotheses and objections against the actual data.

Everyone had their own experience of the pandemic, and everyone is likely to have their own reaction to these reports — including to the findings shown in this post. Whether New Zealand's combination of near-best excess mortality and, indexed over the full pandemic period, relatively low policy stringency strikes you as expected, surprising, or still not the whole story, the Commission's choices mean that you are not left arguing from memory or anecdote. The data is there, clearly laid out, and deliberately designed to let you test your assumptions against the evidence. That is exactly what good data communication makes possible — and exactly what we can aim for when we need to communicate clearly about things that are complicated or contentious.

Tables may not be flashy, but they’re often very useful

When faced with data-intensive insights to communicate, common mistakes are to go for a cool new type of chart you’ve recently seen, to include a variety of different visualisations to ‘mix things up’, or to rely too heavily on a single type of visualisation.

It’s helpful to think of the best way to communicate insights derived from data the same way a tradesperson might think about their tools, and select the right one for the job at hand. While tables may not be the flashiest tool in the data toolbox, they are often a good visualisation option, and can be the best one.

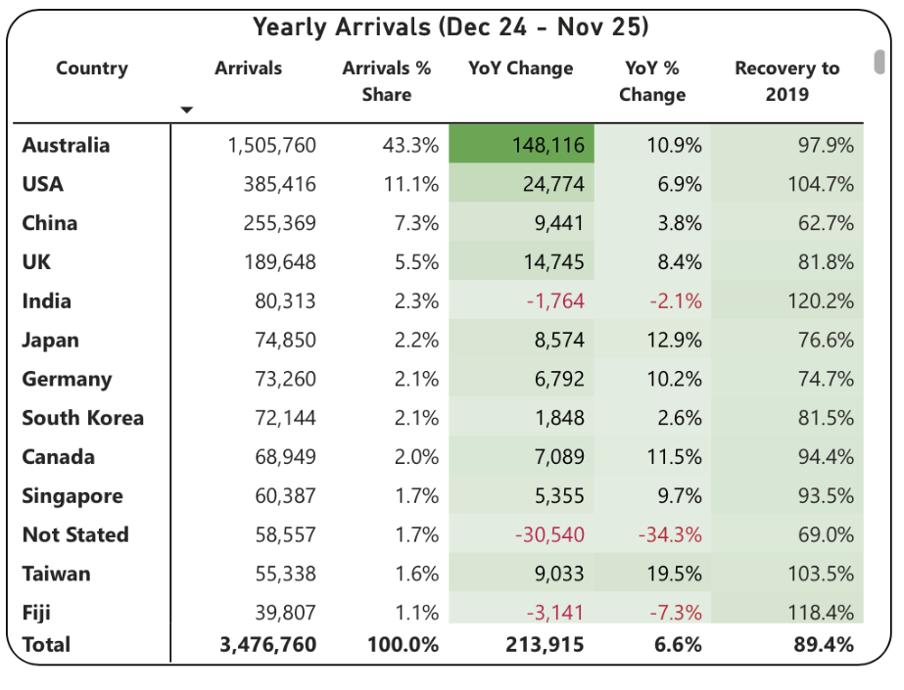

This example from Tourism New Zealand helps illustrate why that’s the case. The table shows data related to international visitors' arrivals in New Zealand. It is part of an interactive online dashboard and includes many more countries and territories than can be seen in this screenshot (237 in total). Data for the other countries is visible if you view the data online and scroll or download it.

This is good data communication because it aligns the visualisation type with audience needs.

Why tables work well when audience interests vary, and there are a lot of possible ways of aggregating and showing the data

If the target audience were only interested in the total number of visitor arrivals, that could easily be shown with a different chart type, such as a line chart showing arrivals over time. However, it’s easy to imagine many reasons why people might want to be able to see the disaggregated data for specific countries. Something like a line chart, scatter plot, or bubble chart showing each country or territory individually would be far too cluttered and make it hard to discern precise values for specific countries.

Showing the data in this table format makes it possible for viewers to easily see the data for the countries that are of interest to them. That might vary depending on whether the user is a government policy analyst, someone working for an airline, the owner of a specific tourism-focussed business, etc.

Lesson: Consider what type of data communication will create the best user experience. When there are many possible cuts of the data and different audience members are interested in different aspects, tables often work better than charts.

Smart design choices reduce cognitive load

Good tables aren’t just about showing numbers in rows and columns. Notice the conditional formatting in this example: green shading shows year-over-year increases, with darker shades indicating larger gains. Australia’s substantial 148,116 increase appears in the darkest green. Red text flags decreases, such as the drop in visitor numbers from India and Fiji.

This formatting follows data visualisation conventions that most viewers intuitively understand: red typically signals decreases or concerning values, green indicates increases or positive values, and darker shades represent greater magnitude. These conventions reduce cognitive load: users don’t need to learn a new visual language for each dataset but can instead focus on the insights revealed by the data. Such formatting also lets users quickly spot patterns and outliers without needing to manually compare numbers across rows.

While it’s not obvious from the screenshot, clicking on the title of any column in the table enables you to sort on that metric, and the dots in the upper right corner lead to more options, including filtering. Those features enable users to see the data in the way that’s most helpful for them with minimal effort. For example, a capacity analyst at an airline might want to keep the data sorted as it is here, by number of arrivals. In contrast, a policy analyst documenting the effectiveness of Covid recovery policies might sort on the final column, and someone with a tourism business focussing on visitors from just a few countries could filter the data to show only those countries.

Lesson: Good design reduces the work viewers must do. Features like conditional formatting should help users spot patterns instantly, while sorting and filtering capabilities let users organise data for their specific questions rather than forcing them to search through irrelevant information.
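The features described above (shading by size of increase, red for decreases, sorting, and filtering) can be sketched as three small functions. This is a rough illustration, not how the Tourism New Zealand dashboard is built: the rows are invented placeholder countries, the function names are hypothetical, and the thirds used as shading thresholds are an arbitrary assumption.

```python
# Hypothetical rows, NOT Tourism New Zealand's data.
ROWS = [
    {"country": "Country A", "arrivals": 1_200_000, "change": 150_000},
    {"country": "Country B", "arrivals": 300_000, "change": 20_000},
    {"country": "Country C", "arrivals": 80_000, "change": -5_000},
]


def cell_style(change, largest_gain):
    """Conditional formatting: red text for decreases, darker green for larger gains."""
    if change < 0:
        return "red text"
    if change == 0 or largest_gain <= 0:
        return "no shading"
    ratio = change / largest_gain  # assumed thresholds: thirds of the largest gain
    if ratio > 2 / 3:
        return "dark green"
    if ratio > 1 / 3:
        return "medium green"
    return "light green"


def sort_rows(rows, column, descending=True):
    """Equivalent of clicking a column title to sort on that metric."""
    return sorted(rows, key=lambda row: row[column], reverse=descending)


def filter_rows(rows, countries):
    """Equivalent of filtering the table to a chosen set of countries."""
    return [row for row in rows if row["country"] in countries]


if __name__ == "__main__":
    largest = max(row["change"] for row in ROWS)
    for row in sort_rows(ROWS, "change"):
        print(row["country"], row["change"], cell_style(row["change"], largest))
    print(filter_rows(ROWS, {"Country C"}))
```

Keeping styling, sorting, and filtering as separate steps mirrors the point of the lesson: the formatting surfaces patterns at a glance, while sorting and filtering let each user arrange the same rows around their own question.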

When tables work - and when they don’t

For all their strengths in an interactive dashboard context like this one, tables are normally much less effective in a presentation setting. Imagine projecting this table in a conference room. The font would be too small to read if you showed all countries at once. If you enlarged the font and spread the data across multiple slides, audiences couldn’t easily find the countries of greatest interest to them unless the countries were arranged in alphabetical order. But alphabetical ordering would obscure the patterns revealed by metric-based sorting.

The difference comes down to how people interact with the data. Dashboard users can sort, filter, scroll, and spend time with individual data points that matter to them. Presentation audiences are passive viewers who see whatever the presenter shows them, in whatever order, for however long the slide stays on screen. Different contexts demand different approaches.

If the data had to be communicated in a presentation, it would be better to focus on the more aggregated data and the overall trends and patterns in that, and then distribute a detailed table via something like a handout or a link. A link would make it possible to offer the types of interactivity described previously, but even in a handout the data could be shown in different orders (e.g., alphabetical, greatest to fewest arrivals, greatest year on year change, etc.) to aid usability.

Lesson: Context determines effectiveness. The same data often requires different visualisations for different viewing situations. Design for how and where your audience will actually view the data.

Choose the right tool for the job

As will be discussed in subsequent posts, there are many things that can be done to polish data visualisations and other communications, but the starting point should be choosing the right type of data visualisation for the audience, the data, and the delivery context. Tables may not be the flashiest choice, but there are many situations where they are the right tool for the job.